The AI Executive Brief - Issue #22

Week of March 9, 2026

Silicon sovereignty, autonomous agents, and world models are the new operating environment. Here is what you need to know, and what to do about it.

Meta launched four in-house MTIA AI chips (300–500 series) on March 11, accelerating its break from Nvidia/AMD dependence for ranking, recommendations, and inference workloads.

AMI Labs (LeCun) secured Europe’s largest-ever seed round ($1.03 billion at a $3.5 billion valuation) to commercialize “world models” as a post-LLM paradigm for physical reasoning and planning.

Anthropic made 1-million-token context generally available at standard pricing for Claude Opus 4.6 and Sonnet 4.6, enabling persistent agents that act as autonomous digital coworkers.

Three strategic realities crystallized this week: hardware is now a core differentiator, alternative architectures are attracting massive capital, and long-horizon agents have crossed the enterprise threshold.

SILICON SOVEREIGNTY

Meta’s MTIA Chip Family: The Full-Stack Moat Takes Shape

Meta revealed the MTIA 300, already in production for ranking and recommendation systems, alongside the 400, 450, and 500 chips rolling out through 2027, each optimized for generative AI inference and high-volume recommendation workloads. The strategy is deliberate: blend commercial GPUs with custom accelerators to achieve performance parity while cutting vendor dependency and capex intensity. This is a structural shift in how the world’s largest social platform funds its AI future.

“The real moat is no longer just model weights - it is the full stack: hardware, data flywheels, and inference economics.”

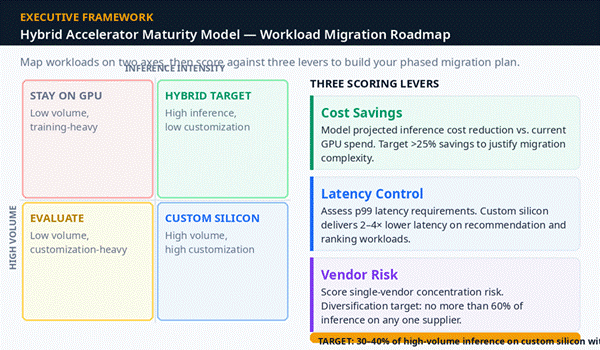

Business Implications & Competitive Dynamics

Meta joins Google (TPUs) and Amazon (Trainium/Inferentia) in vertical silicon integration, transforming AI hardware from a commodity purchase into a strategic infrastructure decision. Nvidia retains a commanding lead but now faces sustained pricing pressure and volume erosion from its largest customers. We should expect accelerated custom-silicon roadmaps from Microsoft, Oracle, and enterprise clouds within the next 18 months. Early data suggests Meta is already realizing meaningful cost and latency advantages in recommendation systems that power billions of daily interactions.

Operational Risks & Value Creation

The risks are real: multi-year R&D cycles, yield challenges, and integration friction with existing GPU fleets. But the value case is compelling. Lower inference costs become critical as agent volumes explode, and faster feature iteration and negotiating leverage in Nvidia/AMD deals add further upside. Analysts project hyperscalers could redirect tens of billions annually from GPU spend into proprietary silicon, a reallocation that reshapes the entire semiconductor supply chain.

AGENTIC AI

Anthropic’s 1M-Token Context: The Agentic Tipping Point Has Arrived

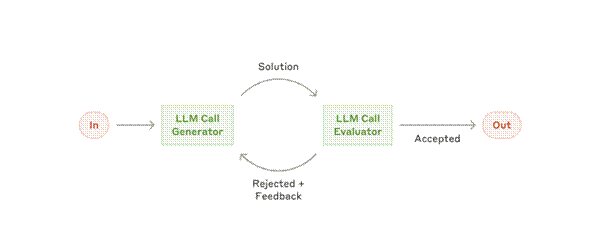

On March 13, Anthropic removed long-context premiums and raised media limits to 600 images or PDF pages for Claude Opus 4.6 and Sonnet 4.6. Combined with native agentic capabilities already embedded in the Claude family, this move eliminates the last major friction point for persistent, codebase-wide, or document-heavy agents. What was once a premium capability reserved for deep-pocketed research teams is now a standard feature available to any enterprise.

“Early adopters report 10× workflow compression in software engineering and knowledge work. The bottleneck is no longer the model - it is your readiness to deploy it.”

Business Implications & Competitive Dynamics

Enterprises can now run long-running agents on legal discovery, multi-million-line codebases, or longitudinal research without premium surcharges, directly competing with OpenAI’s GPT-5.4 computer-use agents and Google’s Workspace integrations. Competitive edge is shifting decisively from raw model intelligence to orchestration, memory management, and governance. Anthropic’s constitutional AI stance gives it a trust advantage in regulated sectors such as healthcare, finance, and defense, where governance is not optional.

Operational Risks & Value Creation

Context dilution remains a real risk. Models can still hallucinate over 1M tokens, and compute costs can explode without proper throttling. New attack surfaces for prompt injection in long sessions demand updated security postures. The value creation opportunity, however, is immediate. Autonomous code review, regulatory compliance agents, and multi-week research loops that were previously human-bound are now within reach.
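For teams wondering what "proper throttling" might look like in practice, here is a minimal sketch of a per-session token budget. It is an illustration only: the `TokenBudget` class, the `run_session` helper, and the example numbers are hypothetical and are not part of any vendor's API. The idea is simply to refuse a request before it is sent if it would push a session past an agreed cap.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Hypothetical per-session cap on the tokens an agent may consume."""
    limit: int
    used: int = 0

    def remaining(self) -> int:
        return self.limit - self.used

    def charge(self, tokens: int) -> bool:
        """Record usage if it fits under the cap.

        Returns False, and records nothing, if the request would exceed the cap.
        """
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used + tokens > self.limit:
            return False
        self.used += tokens
        return True


def run_session(request_sizes, budget):
    """Process requests in order; stop at the first one that would exceed the budget."""
    completed = 0
    for size in request_sizes:
        if not budget.charge(size):
            break  # in a real system: pause, summarize context, or escalate to a human
        completed += 1
    return completed
```

In a real deployment the cap would come from your finance and platform teams, and the "stop" branch would more likely trigger context summarization or a human review than a hard halt.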

BEYOND LLMs

AMI Labs & the $1 Billion Bet Against Transformers

Yann LeCun’s AMI Labs secured Europe’s largest-ever seed round, $1.03 billion at a $3.5 billion valuation, to commercialize “world models”: AI systems that reason about physics, causality, and real-world constraints rather than predicting the next token in a sequence. This is not a marginal improvement on existing LLMs. It is a fundamental architectural challenge to the transformer paradigm that has dominated AI investment for the past five years.

“Transformer scaling laws are not destiny. Causal, physics-grounded intelligence will matter more for real-world value creation in manufacturing, healthcare, and robotics.”

For Enterprise Leaders

World models represent the next frontier for AI in physical environments: autonomous vehicles, industrial robotics, surgical systems, and supply chain simulation. The $1B+ capital commitment signals that institutional investors believe the transformer era has a ceiling, and that the ceiling may arrive sooner than the market expects. For boards allocating AI R&D budgets, this is a signal to diversify architectural bets, not double down exclusively on LLM-based solutions.

Allocate 5–10% of your AI R&D budget to non-LLM paradigms. Monitor AMI Labs, DeepMind’s Gato successors, and embodied AI startups as bellwethers. Brief your board on world-model investments as part of your next architectural diversification review.

Leadership Action Playbook

1 Infrastructure Audit (Next 7 Days): Task your CIO to model 12-month capex scenarios assuming a 30% shift to custom silicon. Include Meta-style MTIA equivalents and split-inference options such as AWS Trainium combined with Cerebras. Identify which workloads are most exposed to Nvidia pricing risk.

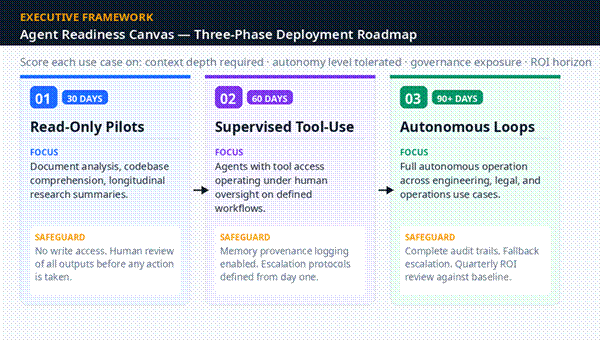

2 Agent Pilot Mandate (Next 14 Days): Launch three cross-functional pilots (engineering, legal, and operations) on 1M-context platforms. Require quantifiable KPIs from day one: hours saved, error rates, and compliance coverage. No pilot without a measurement framework.

3 Architectural Diversification Scan (Next 30 Days): Brief the board on world-model investments using the AMI Labs round as a benchmark. Allocate 5–10% of AI R&D budget to non-LLM paradigms targeting robotics, simulation, and physical-world use cases.

4 Governance Refresh (Ongoing): Update your AI usage policy to cover long-context data retention, agent audit trails, and military/dual-use restrictions. Explicitly address the Anthropic–Pentagon precedent and its implications for your sector’s regulatory environment.

5 Talent & Org Realignment (Q2 Target): Identify and define “agent orchestrator” roles within your organization. Retrain 10% of knowledge workers on agent supervision within Q2. Track ROI quarterly against baseline productivity metrics to build the internal business case for further investment.
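As a starting point for the infrastructure audit in step 1, the capex scenario can be roughed out in a few lines. This is a back-of-the-envelope sketch, not a financial model: the `capex_scenario` function is hypothetical, and `custom_cost_ratio` (the cost of custom silicon relative to GPUs for the same workload) is an assumption your CIO would need to replace with real quotes. The 12-month horizon and the 30% shift come from the playbook above.

```python
def capex_scenario(monthly_gpu_spend, shift_fraction, custom_cost_ratio, months=12):
    """Compare all-GPU spend with a partial shift to custom silicon.

    monthly_gpu_spend: current monthly GPU spend, in any currency unit
    shift_fraction:    share of workload moved to custom silicon (0 to 1)
    custom_cost_ratio: ASSUMED cost of custom silicon relative to GPUs
                       for the same workload (0.5 means half the cost)
    Returns (baseline, scenario, savings) over the horizon.
    """
    if not 0 <= shift_fraction <= 1:
        raise ValueError("shift_fraction must be between 0 and 1")
    if custom_cost_ratio < 0:
        raise ValueError("custom_cost_ratio must be non-negative")

    baseline = monthly_gpu_spend * months
    gpu_part = baseline * (1 - shift_fraction)                 # workload staying on GPUs
    custom_part = baseline * shift_fraction * custom_cost_ratio  # workload moved off GPUs
    scenario = gpu_part + custom_part
    return baseline, scenario, baseline - scenario


if __name__ == "__main__":
    # Illustrative inputs only: 1M per month, 30% shift, custom silicon at half the cost.
    baseline, scenario, savings = capex_scenario(1_000_000, 0.30, 0.5)
    print(f"baseline={baseline:,.0f} scenario={scenario:,.0f} savings={savings:,.0f}")
```

Running the function across a range of `custom_cost_ratio` values (for example 0.4 to 0.9) gives a quick sensitivity view and shows which workloads are worth a deeper pricing analysis. Note that this sketch ignores one-time migration and integration costs, which the risk section flags as material.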

Executive Perspective

This week quietly closed the chapter on “AI as software” and opened the era of “AI as infrastructure and autonomous operator.” Meta’s chips show that the real moat is no longer just model weights; it is the full stack: hardware, data flywheels, and inference economics. LeCun’s $1B+ bet on world models is a reminder that transformer scaling laws are not destiny; causal, physics-grounded intelligence will matter more for real-world value creation in manufacturing, healthcare, and robotics.

Anthropic’s context move, meanwhile, forces a leadership reckoning. Organizations that treat agents as productivity tools will capture incremental gains. Those that redesign processes, decision rights, and accountability structures around persistent AI coworkers will compound advantage at a fundamentally different rate. The deeper truth is that power is shifting from model providers to orchestrators. Executives who master hybrid silicon strategies, agent governance, and architectural pluralism will define the next decade.

The companies that hesitate, clinging to single-vendor GPU contracts or chat-only AI deployments, risk becoming the Blockbusters of the agentic age.